It quickly turned out that our exploit doesn’t work anymore! The reason: Vista RC2 now blocks write-access to raw disk sectors for user mode applications, even if they are executed with elevated administrative rights.

In my Subverting Vista Kernel speech, which I gave at several major conferences over the past few months, I discussed three possible solutions to mitigate the pagefile attack. Just to remind you, the solutions mentioned were the following:

1. Block raw disk access from usermode.

2. Encrypt pagefile (alternatively, use hashing to ensure the integrity of paged out pages, as it was suggested by Elad Efrat from NetBSD).

3. Disable kernel mode paging (sacrificing probably around 80MB of memory in the worst case).
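To make the hashing variant of solution #2 a bit more concrete, here is a minimal sketch in Python. It is only a toy model, not how any real memory manager works: the class name `PagefileIntegrity`, the slot numbering and the 4096-byte page size are assumptions made for illustration. The idea is simply that a hash of every page is recorded (in memory that is never paged out) when the page goes to disk, and checked again when the page comes back.

```python
import hashlib

PAGE_SIZE = 4096  # assumed page size, for illustration only


class PagefileIntegrity:
    """Toy model: remember a hash of each paged-out page, verify it on page-in."""

    def __init__(self):
        # In a real system this table would have to live in non-pageable memory,
        # otherwise an attacker could patch the hashes on disk as well.
        self._hashes = {}  # pagefile slot number -> SHA-256 digest

    @staticmethod
    def _digest(slot, data):
        # Bind the hash to the slot so a valid page cannot be moved elsewhere.
        return hashlib.sha256(slot.to_bytes(8, "little") + data).digest()

    def page_out(self, slot, data):
        """Record the hash and return the bytes that would be written to disk."""
        self._hashes[slot] = self._digest(slot, data)
        return data

    def page_in(self, slot, data_from_disk):
        """Return the page if it is unmodified, otherwise refuse to load it."""
        if self._hashes.get(slot) != self._digest(slot, data_from_disk):
            raise ValueError(f"pagefile slot {slot} was modified on disk")
        return data_from_disk
```

With such a check in place, a page that has been patched on disk (which is what the pagefile attack does) would be rejected on page-in instead of being executed in kernel mode.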

And I also made a clear statement that solution #1 is actually a bad idea. I explained that if MS decided to disable write-access to raw disk sectors from usermode, not only might that cause some incompatibility problems (think about all those disk editors, un-deleters, etc…), but it also would not be a real solution to the problem…

Imagine a company wanting to release e.g. a disk editor. Now, with the blocked write access to raw disk sectors from usermode, the company would have to provide their own custom, but 100% legal, kernel driver for allowing their, again 100% legal, application (disk editor), to access those disk sectors, right? Of course, the disk editor's auxiliary driver would have to be signed – after all it’s a legal driver, designed for legal purposes and ideally having neither implementation nor design bugs! But, on the other hand, there is nothing which could stop an attacker from “borrowing” such a signed driver and using it to perform the pagefile attack. The point here is, again, there is no bug in the driver, so there is no reason for revoking a signature of the driver. Even if we discovered that such driver is actually used by some people to conduct the attack!

But it seems that MS actually decided to ignore those suggestions and implemented the easiest solution, ignoring the fact that it really doesn’t solve the problem…

Actually, if we weren't such nice guys, we could develop a disk editor together with a raw-disk-access kernel driver, then sign it and post it on COSEINC's website. But we're the good guys, so I guess somebody else will have to do that instead ;)

Kernel Protection vs. Kernel Patch Protection (Patch Guard)

Another thing - lots of people confuse kernel protection (i.e. the policy for allowing only digitally signed kernel drivers to be loaded) with Kernel Patch Protection, also known as Patch Guard.

In short, the pagefile attack, which I demoed at SyScan/Black Hat, is a way to load unsigned code into the kernel, thus it’s a way to bypass Vista kernel protection. Bypassing kernel patch protection (Patch Guard) is a different story. E.g. Blue Pill, a piece of malware which abuses AMD Pacifica hardware virtualization, which I also demoed during my talk, “bypasses” PG. The word “bypass” is a little bit misleading here though, as BP does not make any special effort to disable or bypass PG explicitly; it simply doesn’t care about PG, because it’s located above (or below, depending on where your eyes are located) the whole operating system, including PG. Yes, it’s that simple :)

Also, almost any malware of type II (see my BH Federal talk for details about this malware classification) is capable of “bypassing” PG, simply because PG is not designed to detect changes introduced by type II malware. So, e.g. deepdoor, a backdoor which I demonstrated in January at BH Federal, is undetectable by PG. Again, not a big deal – it’s just that PG was not designed to detect type II malware (nor type III, like BP). So, I'm a little bit surprised to hear people talking about "how hard would it be to bypass PG...", as that is something which has been done already (and I'm not referring to Metasploit's explicit technique here) - you just need to design your malware as type II or type III and you're done!

But even with all that being said, I still think that PG is actually a very good idea. PG should not be thought of as a direct security feature. PG's main task is to keep legal programs from acting like popular rootkits. Keeping malware away is not its main task. However, by ensuring that legal applications do not introduce rootkit-like tricks, PG makes it easier and more effective to create robust malware detection tools.

I spent a few years developing various rootkit detection tools and one of the biggest problems I came across was how to distinguish between hooking introduced by real malware and... hooking introduced by some A/V products like personal firewalls and Host IDS/IPS programs. Many of the well known A/V products use exactly the same hooking techniques as some popular malware, like rootkits! This is not good, not only because it may have a potential impact on system stability, but, and this is the most important thing IMO, because it confuses malware detection tools.

Patch Guard, the technology introduced in 64 bit versions of Windows XP and 2003 (yes, PG is not a new thing in Vista!), is a radical, but probably the only, way to force software vendors not to use undocumented hooking in their products. Needless to say, there are other, documented ways to implement e.g. a personal firewall or an A/V monitor, without using those undocumented hooking techniques.

Just my 2 cents to the ongoing battle for Vista kernel...

29 comments:

I disagree. After all, if you e.g. consider a file system filter, there are still many ways in which your A/V can determine whether the file in question (the one which triggered the file system hook) is “good” or “bad” – this is where the A/V can actually show off. If you consider e.g. personal firewalls, even though they will all be using e.g. an extra officially registered NDIS protocol or NDIS filter, they might still differentiate among themselves e.g. by the ability to detect and stop different covert channels.

Many people would argue then, that the deeper the hook is, the better “tamper-proof” protection it offers – that’s a myth! Hey Alex, weren’t you the one who was claiming that once malware is in the kernel it can bypass *any* type of personal firewall, no matter how deep the hooking is located? Those who missed that should see Alex’s BH presentation about bypassing PFWs. The truth is – once malware gets into the kernel, no matter what tricks the AV/HIDS/HIPS/PFW uses, it will always lose. Unless we moved our security products into ring -1, but there are other problems connected with this and this is a totally different story.

So, in my opinion, it’s not worth accepting all this “hooking mess” in the kernel for the sake of a false sense of better security…

If someone wants to create a driver which offers raw disk access to user mode applications, the driver has to be signed by Microsoft, as you said. I have never used raw disk access, so I only have a simple question: would it be possible to block, from within that driver, those IOCTLs which require raw access to the pagefile? If so, can't Microsoft refuse to sign those drivers which don't implement some protection mechanism against this kind of attack? Maybe this is a stupid question because, as I said, I have never used raw disk access and I don't know how you managed to find the pagefile within the hard drive using raw disk access (dwelling through sectors and so on).

But it seems that MS actually decided to ignore those suggestions and implemented the easiest solution, ignoring the fact that it really doesn’t solve the problem…

I would like to believe that it's a stop-gap measure implemented for the purpose of allowing the release of Vista to go on undelayed while giving them time to develop a more realistic solution. Probably not, though. It's likely that pressure from third party vendors would eventually cause Microsoft to have to fix this properly anyway. Maybe you should write that disk editor just to mess with m. :)

To Zori: MS doesn’t need to sign anything – it is the ISV who is supposed to sign its kernel drivers: http://www.microsoft.com/whdc/winlogo/drvsign/kmcs_walkthrough.mspx

To Viraptor: If you read my article carefully you will notice that I used the word “seems” :) And I would love to hear from MS if they actually implemented another protection, like e.g. hashing for paged out pages…

To 90210: We’re talking about how to help creating an effective compromise detection (PG helps here by eliminating false positives). And you would like to sacrifice this in the name of what? Making it slightly harder to bypass HIDS/HIPS/PFWs? Come on, we want real security, not something which is just slightly harder to bypass!

You say that you're 'good guys' and that is respectable :).

But I think that while a product is still in Beta (I know the countdown has started but...) all exploits should be publicly released to force the software manufacturer to fix it before going to press. By not releasing a POC example, someone else will be able to write a malicious variant ONCE the product is released and installed on lots of machines worldwide.

Stephen

Blocking write-access to raw disk sectors for user mode applications is the best solution because it will also block any other attacks, not only this specific pagefile attack.

"any other attacks"? like what? remember that you are (were) required to have admin rights to get (got) access to raw disk…

What do you think of "ProcessGuard" for Windows XP? Does it not already offer protections similar to some of the 'new' ones Money$oft is introducing in Vista?

Hi Joanna,

An application may write to the sectors on which a volume resides, but will need to acquire exclusive access to the volume (by locking or dismounting it) before doing so.

Otherwise, its writes can collide with writes being issued by the file system and end up corrupting the volume and possibly even destabilizing the system.

So a disk partitioning or management application should not need a kernel-mode counterpart to accomplish its tasks.

Regards

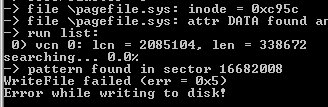

Karan, but it’s the WriteFile() function which fails (see the picture) and not the CreateFile() – so I don’t see how this could have failed because of the lock…

Hi Joanna,

To gain exclusive access to a volume, an application needs to do the following:

1. Open a handle to the volume

2. Send down either FSCTL_LOCK_VOLUME or FSCTL_DISMOUNT_VOLUME

If the above operations succeed, then any write sent down via that handle will be honored.
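The two steps above can be sketched in Python via `ctypes`. This is a rough illustration under stated assumptions rather than production code: the helper names `ctl_code` and `open_and_lock_volume` are made up for this example, the Win32 part only works on Windows and needs sufficient privileges, and error handling is kept to a minimum. The control codes are derived with the standard `CTL_CODE` bit layout.

```python
import ctypes
import sys

FILE_DEVICE_FILE_SYSTEM = 0x00000009
METHOD_BUFFERED = 0
FILE_ANY_ACCESS = 0


def ctl_code(device_type, function, method, access):
    """Python equivalent of the CTL_CODE macro from the Windows headers."""
    return (device_type << 16) | (access << 14) | (function << 2) | method


FSCTL_LOCK_VOLUME = ctl_code(FILE_DEVICE_FILE_SYSTEM, 6, METHOD_BUFFERED, FILE_ANY_ACCESS)
FSCTL_DISMOUNT_VOLUME = ctl_code(FILE_DEVICE_FILE_SYSTEM, 8, METHOD_BUFFERED, FILE_ANY_ACCESS)

GENERIC_READ = 0x80000000
GENERIC_WRITE = 0x40000000
FILE_SHARE_READ = 0x00000001
FILE_SHARE_WRITE = 0x00000002
OPEN_EXISTING = 3
INVALID_HANDLE_VALUE = ctypes.c_void_p(-1).value


def open_and_lock_volume(volume_path):
    """Step 1: open a handle to the volume. Step 2: send FSCTL_LOCK_VOLUME."""
    if sys.platform != "win32":
        raise OSError("volume locking is only available on Windows")
    from ctypes import wintypes

    k32 = ctypes.WinDLL("kernel32", use_last_error=True)
    k32.CreateFileW.restype = wintypes.HANDLE
    k32.CreateFileW.argtypes = [wintypes.LPCWSTR, wintypes.DWORD, wintypes.DWORD,
                                wintypes.LPVOID, wintypes.DWORD, wintypes.DWORD,
                                wintypes.HANDLE]
    k32.DeviceIoControl.restype = wintypes.BOOL
    k32.DeviceIoControl.argtypes = [wintypes.HANDLE, wintypes.DWORD,
                                    wintypes.LPVOID, wintypes.DWORD,
                                    wintypes.LPVOID, wintypes.DWORD,
                                    ctypes.POINTER(wintypes.DWORD), wintypes.LPVOID]
    k32.CloseHandle.argtypes = [wintypes.HANDLE]

    handle = k32.CreateFileW(volume_path, GENERIC_READ | GENERIC_WRITE,
                             FILE_SHARE_READ | FILE_SHARE_WRITE,
                             None, OPEN_EXISTING, 0, None)
    if handle == INVALID_HANDLE_VALUE:
        raise ctypes.WinError(ctypes.get_last_error())

    returned = wintypes.DWORD(0)
    if not k32.DeviceIoControl(handle, FSCTL_LOCK_VOLUME, None, 0, None, 0,
                               ctypes.byref(returned), None):
        error = ctypes.get_last_error()
        k32.CloseHandle(handle)  # the lock failed, so do not keep the handle
        raise ctypes.WinError(error)
    return handle  # the lock is held until this handle is closed
```

A call such as `open_and_lock_volume(r"\\.\E:")` (the drive letter is just an example) would be expected to fail while other handles are open on the volume, which is why this approach cannot simply be applied to the volume the system is running from.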

Regards

Hi Karan,

Correct me if I’m wrong, but that means that, starting from RC2, you cannot create e.g. a disk wiping utility or something like that, which would be able to run on the main system volume, right? So, the question is – if somebody created such a tool with the necessary kernel driver, would Microsoft revoke the signature for the driver?

Hi Joanna,

The sectors that make up a volume either hold file data or file system meta-data. An application may wipe the sectors that hold file data and should use the file handle to do so. Writing to the file system meta-data directly requires ensuring that the file system itself isn’t writing to those sectors at that same moment and this can only be achieved by locking the volume.

For instance, say an application wishes to update the boot code. It would open a handle to the volume and write to sector 0. Now if the volume happens to be formatted as FAT32 and a file is being added/deleted around the same time, then the file system will want to write to sector 0 as well because that's where the dirty flag is stored. These two writes will go down in parallel and depending on the order in which they get executed, the boot code update may or may not happen.
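The race described here is a classic lost update, and a small Python simulation may help to picture it. This is only a toy model with assumed details: the byte offsets, the two-byte "boot code" and the function names are made up for illustration, and a real file system is of course far more involved. Both parties read the same copy of sector 0, change their own part, and write the whole sector back, so whichever write lands last wins.

```python
SECTOR_SIZE = 512
BOOT_CODE_OFFSET = 0x5A    # illustrative offset of the boot code (assumption)
DIRTY_FLAG_OFFSET = 0x41   # illustrative offset of the dirty flag (assumption)
NEW_BOOT_CODE = b"\xeb\xfe"  # placeholder bytes standing in for new boot code


def app_prepares_write(sector):
    """The application patches the boot code in its own copy of sector 0."""
    copy = bytearray(sector)
    copy[BOOT_CODE_OFFSET:BOOT_CODE_OFFSET + len(NEW_BOOT_CODE)] = NEW_BOOT_CODE
    return bytes(copy)


def fs_prepares_write(sector):
    """The file system sets the dirty flag in its own copy of sector 0."""
    copy = bytearray(sector)
    copy[DIRTY_FLAG_OFFSET] |= 0x01
    return bytes(copy)


def simulate(order):
    """Both writers start from the same sector; writes land in the given order."""
    disk_sector0 = bytes(SECTOR_SIZE)
    pending = {
        "app": app_prepares_write(disk_sector0),
        "fs": fs_prepares_write(disk_sector0),
    }
    for writer in order:
        disk_sector0 = pending[writer]  # a sector write replaces the whole sector
    return disk_sector0


def boot_code_updated(sector):
    end = BOOT_CODE_OFFSET + len(NEW_BOOT_CODE)
    return sector[BOOT_CODE_OFFSET:end] == NEW_BOOT_CODE


def dirty_flag_set(sector):
    return bool(sector[DIRTY_FLAG_OFFSET] & 0x01)
```

In this model `simulate(["app", "fs"])` ends with the dirty flag set but the boot code update lost, while `simulate(["fs", "app"])` keeps the boot code and loses the dirty flag. Locking the volume first removes the second writer, so the outcome no longer depends on ordering.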

Having said that, updating the boot code happens to be a common operation. So for application compatibility, there is no restriction on writes to the sectors of the volume where the boot code resides.

Writes to sectors outside the volumes, where the partition tables reside, are not blocked as well. So disk partitioning applications should continue to work.

If an application wishes to wipe out the entire disk, it should first delete the partitions on it before zeroing out the sectors. Otherwise, it might wipe out a sector that the file system was reading at the same time and end up destabilizing the machine. Also, the file system might write out to a sector that was just zeroed out; so when the operation completes the user assumes that the disk has been wiped clean when that isn't necessarily the case.

So it seems to me that any application that relies on writing to the sectors of a 'live' volume can have an adverse effect on system stability and has the potential for data loss. The recent change protects against this.

Regards

Hi Karan – you wrote:

“An application may wipe the sectors that hold file data and should use the file handle to do so.”

But, according to Mark Russinovich, this is not enough (http://www.sysinternals.com/Utilities/SDelete.html):

Compressed, encrypted and sparse are managed by NTFS in 16-cluster blocks. If a program writes to an existing portion of such a file NTFS allocates new space on the disk to store the new data and after the new data has been written, deallocates the clusters previously occupied by the file. NTFS takes this conservative approach for reasons related to data integrity, and in the case of compressed and sparse files, in case a new allocation is larger than what exists (the new compressed data is bigger than the old compressed data). Thus, overwriting such a file will not succeed in deleting the file's contents from the disk.

To handle these types of files SDelete relies on the defragmentation API. Using the defragmentation API SDelete can determine precisely which clusters on a disk are occupied by data belonging to compressed, sparse and encrypted files. Once SDelete knows which clusters contain the file's data, it can open the disk for raw access and overwrite those clusters.

To be fair – I haven’t checked myself whether this is still an issue with Vista… Karan?

Another example – let's forget about file wiping utilities – now we want to write a file undeleter – something like e.g. Wininternals’ FileRestore… I don’t have a full version of FileRestore, but from the trial I managed to download it seems to me that it allows for file recovery on the live system volume…

So, can we write such an undeleter for Vista RC2?

The fight against the VISTA kernel goes further

Before granting access to a block device, the OS should check if it is using it (filesystems/page file). If block device is in use, access to it should be restricted.

If a block device is just being used for swapping kernel pages, trying to open it should push the page file to RAM and wipe the disk.

I found that starting with build 5728, write access to the system/boot volume is locked. You can open the volume and write to the first 8K (16 sectors on a 512 byte sector drive). If you open the physical drive, you can write to the physical boot sector, and also to any hidden sectors (i.e. the partition gap). Writing to any other sectors in other areas, using either a drive or a volume handle, will fail. You cannot lock the system volume handle using the IOCTL mentioned above.

In other words, there is no way to access the physical sectors of the system drive that are not the partition gap, MBR, or boot sector.
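To spell out what I observed, here is a small Python model of the rules. It only encodes the observations above and is not taken from any Microsoft documentation. The function names are made up, and I assume a simple layout where the system volume is the first partition, so that everything before `first_partition_start` is the MBR plus the partition gap.

```python
SECTOR_SIZE = 512                  # bytes per sector on the test drive
VOLUME_WRITABLE_BYTES = 8 * 1024   # only the first 8K of the volume is writable


def volume_write_allowed(volume_sector, sector_size=SECTOR_SIZE):
    """Write through a handle to the system volume (sector is volume-relative)."""
    writable_sectors = VOLUME_WRITABLE_BYTES // sector_size
    return 0 <= volume_sector < writable_sectors


def physical_write_allowed(lba, first_partition_start):
    """Write through a handle to the physical drive (sector is an absolute LBA).

    Assumes the system volume is the first partition, so LBA 0 is the MBR and
    the sectors up to first_partition_start form the hidden partition gap.
    """
    return 0 <= lba < first_partition_start
```

For a 512 byte sector drive whose first partition starts at, say, LBA 63, this gives volume sectors 0 to 15 and physical sectors 0 to 62 as writable, and everything else as blocked.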

My guess is that this will break existing utilities, such as the above mentioned SDelete.

I still don't understand why you need raw disk access. Tasks like sector editing can (and should) be done with the system offline, using a Linux distro or Windows PE. Use of the defragmentation API can also be limited in a driver to a single function like DefragmentDevice(device).

I suppose that disk editors, undeleters, disk wipers, etc., can still be implemented by being put on live CDs with some other OS, and having the user boot the live CD, no?

Was it so hard for Microsoft to create a separate swap partition, or at least reserve some region on the filesystem and not allow anybody (even the filesystem) to write to it?

Working with pagefile/paging was always such a pain in file system device drivers.

I've actively used raw disk access in my work in the past for legit reasons. A LiveCD does not fit some of those scenarios, as I have to be able both to load a device driver (like SATA or RAID) and to verify the resulting data/algorithm assumptions in an application (like my own one loaded in a debug session in Visual Studio).

Why do we need a file wiping utility working on a live filesystem? Well, imagine this:

You are a freedom fighter in one of those countries which have problems with respecting human rights. It’s late in the evening; you’re sitting at your computer, hacking your government’s websites for fun and profit (to save the country actually), when suddenly you hear knocking at your door and raised voices outside – you have no illusions – they have just come for you… You may only wish now you had installed Truecrypt before and kept all your sensitive files on a Truecrypt hidden volume, exploiting its cool plausible deniability feature… But you did not! So, you need a file wiping utility, but I guess what you want here is something which could be run immediately, on a running system, and not something which requires rebooting the machine from a special CD. Remember, they are at your door… So, should all those freedom fighters upgrade to Vista? ;)

The capacity to read/write physical/logical sectors has been available in Windows since its inception. It has been used by numerous applications and utilities for useful and beneficial purposes.

What MS has done is to break a lot of programs on a lot of machines a mere month before RTM. This not only blocks support for Vista in a timeframe that independent software developers/companies are trying to meet, but it now incurs an extra cost to their implementation.

This is unfortunate for the developers of utilities and add-on applications. I foresee this "security feature" being worked around, and regardless of the next hack patch that is implemented, it will be worked around again.

Hi Joanna,

You've brought up two very interesting scenarios:

1. SDelete will no longer function correctly on the boot volume on Vista for compressed, encrypted and sparse files (it still needs to be modified to lock the volume before writing to the extents via the volume handle so as to work on non-boot volumes). However, Vista does include a mechanism (FSCTL_SET_ZERO_ON_DEALLOCATION) to help zero out the data clusters of any given file. While this may not be a "secure" erase, it does eliminate the simple case of someone trying to read the old clusters.

2. As for file un-deletion, I would presume the correct way to approach this on a live volume would be to locate the file record, identify the extents and then copy the contents over to a new file. This should continue to work.

Regards

To undelete a file, a program should first prevent possible reuse of the deleted blocks, which is now not possible.

Otherwise the data of the deleted file can be overwritten, for example, by the pagefile.

Hi Karan,

I agree with you on point #2 – it seems that we can create an on the fly un-deleter without having raw write-access to disk sectors. The problem of possible overwrites of sectors belonging to the deleted file always exists – so, I don’t agree with the anonymous person posting above.

However, still the problem remains with creating a secure file wiping utility…

So, I thought – why doesn’t MS create a flexible API to allow for creation of usermode based file wiping utilities? You could go even further and create an API to allow for on the fly disk wiping… The important point here IMO would be to allow the application to provide a buffer which is used for wiping (overwriting).

That should pretty much solve the problem, as we agreed that file un-deleting can be done without raw write-access to the disk and that it’s still possible to implement a disk editor, provided the volume accessed is un-mounted or locked. BTW, it would be nice if MS updated the SDK entry for the CreateFile() function, to let people know that volume locking or un-mounting is now necessary when opening a volume for raw access…

Hi Joanna,

Updating the documentation on some of the Win32 APIs to reflect the new change is an excellent suggestion.

There does exist a mechanism (FSCTL_SET_ZERO_DATA) to zero a range of blocks that a file resides on. A variation on this that allows the user to provide the 'pattern' or 'data' to write out is another very good suggestion.

In the meanwhile, I'd recommend that the freedom fighters keep all their sensitive files on a separate volume :)

Regards

Yeah, Joanna (Trinity) rules! And Microsoft... still the same!

You gave them solutions and they still can't use them, so what are they paid for? For the stress, I think...

Thanks for sharing your thoughts and your work, it's amazing (for me)!

Just for information: EldoS Corporation yesterday announced release of RawDisk, a disk driver that lets you use raw disk access from your user-mode application.

So blocked direct access to the disk is no longer a problem.

>>

So blocked direct access to the disk is no longer a problem.

<<

It's a problem if you don't have 500 euros to spend on a 9K sys file.

:-(
